Assess and Troubleshoot Predictions

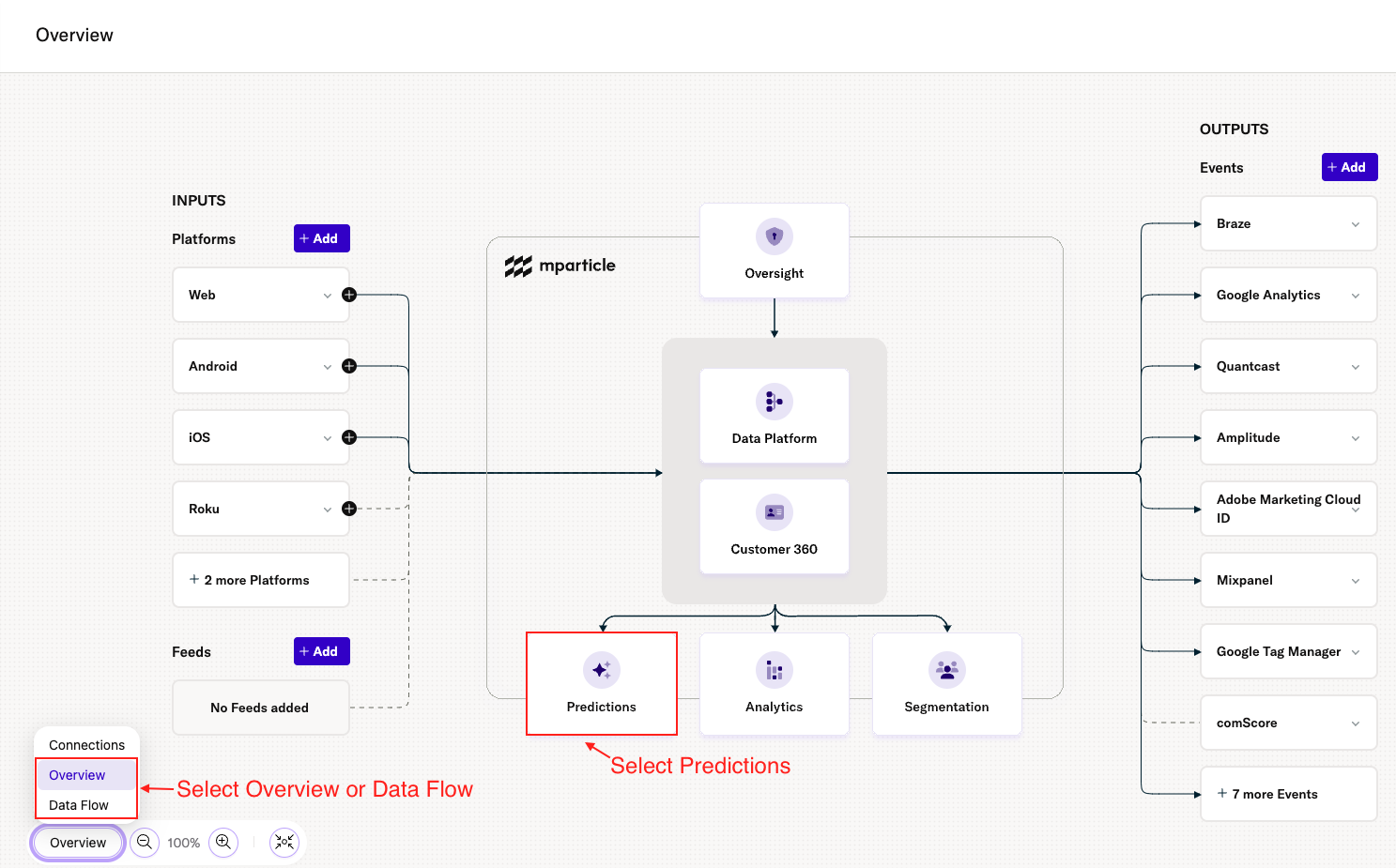

Once you are in the new mParticle UI:

- Select either the Overview or Data Flow view in the Overview Map.

- Select Predictions under the Customer 360 tile.

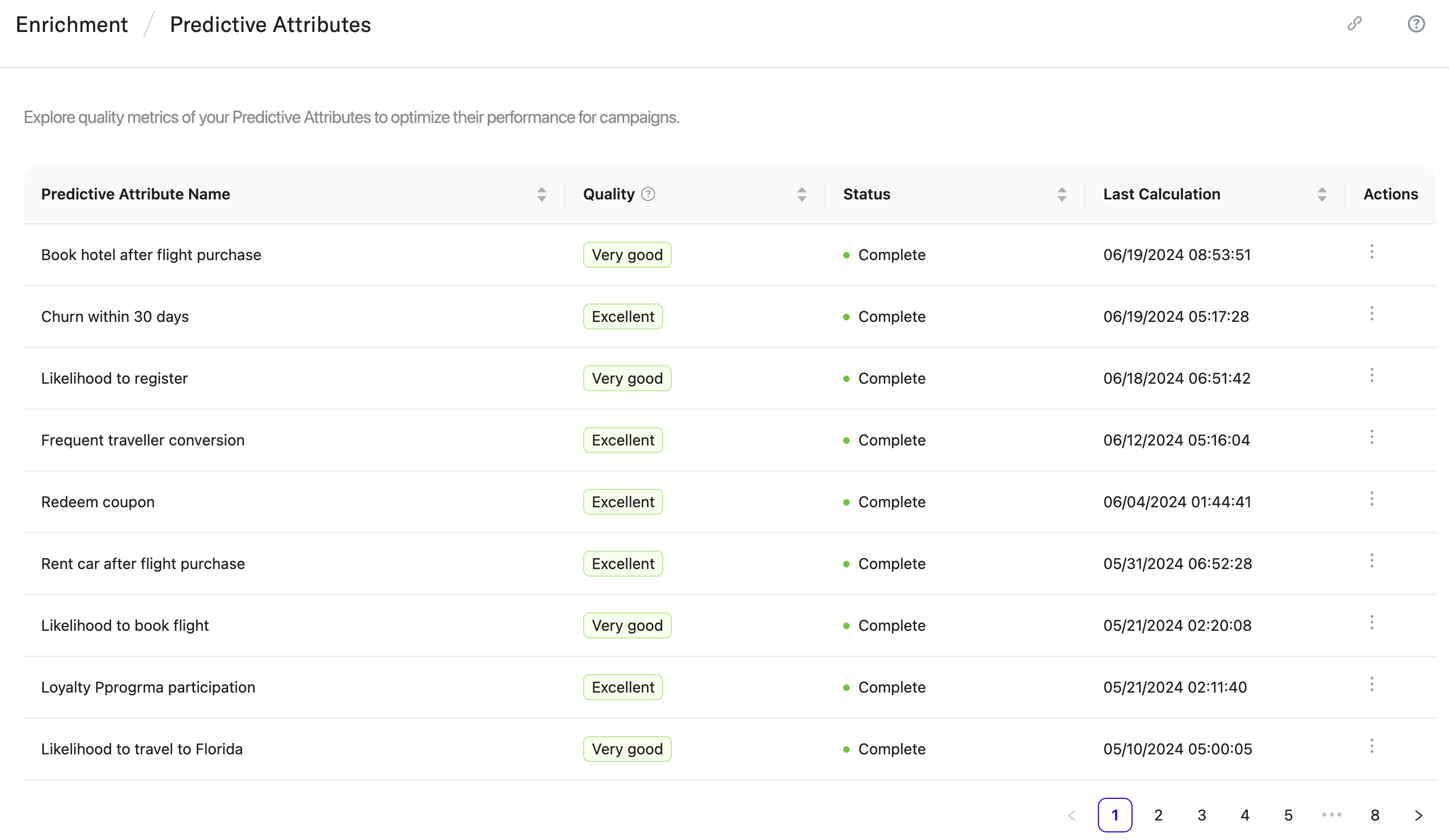

On the Predictions homepage, click View Predictive Attributes. This takes you to Enrichment > Predictive Attributes, where a table summarizes the quality of the Predictive Attributes you have created so far.

This table gives you three insights into each Predictive Attribute you have created:

Quality

Quality reflects the overall accuracy of your prediction. Each time you create a Predictive Attribute, Cortex sets aside a portion of your data to compare the predictions to real-world outcomes. The results of these tests are converted into a score that indicates how well the model performed.

The values in the Quality column correspond to the following scores:

- Excellent: 0.85 - 1.0

- Very Good: 0.75 - 0.85

- Good: 0.65 - 0.75

- Average: 0.55 - 0.65

- Below Average: 0.5 - 0.55

- Unknown: A quality score could not be determined.

The score reflects the share of the model's predictions that matched what was observed in the real world. A score of 1.0, for instance, means that every prediction in the model was correct when compared with real-world data. Conversely, a score of 0.0 means that every prediction was incorrect.
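The mapping from score to Quality label can be sketched in a few lines of Python. This is an illustration only, not Cortex's implementation: the function name `quality_label` and the constant `QUALITY_TIERS` are hypothetical. Because the listed ranges share their boundary values, the sketch assumes a boundary score such as 0.85 belongs to the higher tier. The list above does not say how scores below 0.5 are labeled, so the sketch returns `None` for them.

```python
from typing import Optional

# (lower bound, label), ordered from the highest tier to the lowest.
# Assumption: a boundary value such as 0.85 falls in the higher tier.
QUALITY_TIERS = [
    (0.85, "Excellent"),
    (0.75, "Very Good"),
    (0.65, "Good"),
    (0.55, "Average"),
    (0.50, "Below Average"),
]


def quality_label(score: Optional[float]) -> Optional[str]:
    """Return the Quality label for a score between 0.0 and 1.0."""
    if score is None:
        # No score could be determined.
        return "Unknown"
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0.0 and 1.0")
    for lower_bound, label in QUALITY_TIERS:
        if score >= lower_bound:
            return label
    # Scores below 0.5 are not covered by the documented ranges.
    return None
```

For example, `quality_label(0.7)` returns `"Good"` and `quality_label(None)` returns `"Unknown"`.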

Status

The current status of each prediction’s pipeline:

- Calculating: Cortex is in the process of generating predictions. Predictions that are currently calculating cannot be used in campaigns.

- Active: The prediction is current and ready to be used in a campaign.

- Inactive: The prediction’s pipeline has not been run within the time window you specified, so it has been deactivated. Predictions with a status of Inactive cannot be used in campaigns. To see this information and to reactivate the campaign, visit the Cortex UI.

- Failed: Cortex was unable to generate predictions for your specific users. This typically occurs when the number of users in your pipeline is too small. To diagnose and correct the problem, you will need to contact support.

Last Calculation

The time at which the most recent calculation was completed.

View prediction details

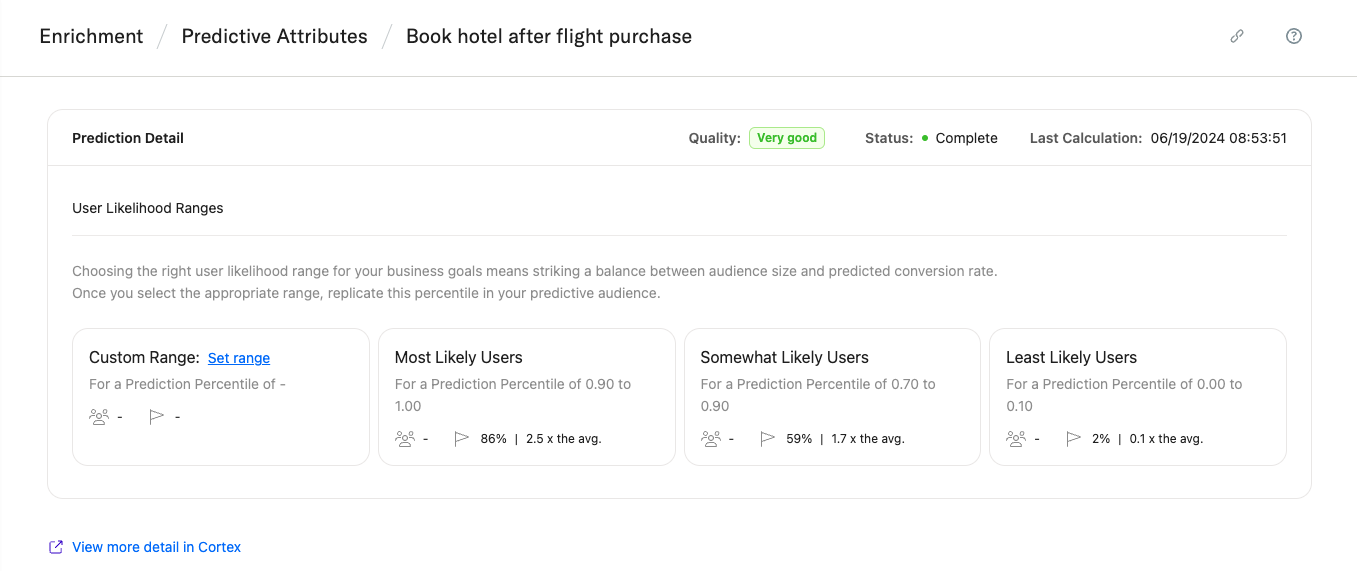

To view more information about a prediction, click the three vertical dots under Actions or the Predictive Attribute name. This will direct you to Enrichment / Predictive Attributes / {Name of Prediction}:

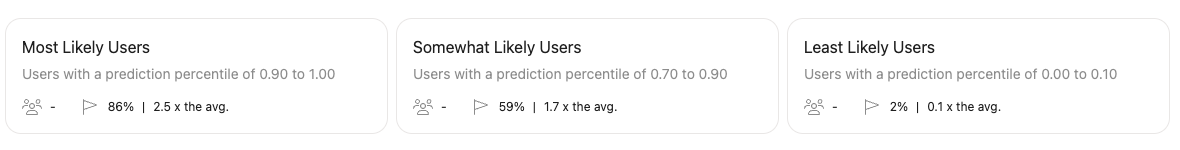

Here, you’ll see tiles displaying three preset User Likelihood Ranges, or subsets of users within this prediction grouped by their likelihood of conversion:

- Most Likely Users: 90th percentile and above. (More likely to convert than at least 90% of other users in the group.)

- Somewhat Likely Users: 70th - 90th percentile. (More likely to convert than at least 70% of other users, but less likely to convert than the top 10%.)

- Least Likely Users: 0th - 10th percentile. (Less likely to convert than at least 90% of users in the group.)

Each User Likelihood Range tile also displays:

- Total users in the range

- A predicted conversion rate for the range

- How likely users in this range are to convert relative to the average across the group
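To make the tile values concrete, here is a minimal Python sketch that groups users into the three preset ranges and computes the three figures for each. It rests on assumptions not stated in this page: each user has a single predicted conversion likelihood, a range's predicted conversion rate is the mean likelihood of its users, and the comparison to the average is the ratio of that rate to the mean across the whole group. The names `PRESET_RANGES` and `summarize_range` and the sample scores are hypothetical.

```python
from statistics import mean

# Preset ranges from the text, as (low percentile, high percentile).
PRESET_RANGES = {
    "Most Likely Users": (90, 100),
    "Somewhat Likely Users": (70, 90),
    "Least Likely Users": (0, 10),
}


def summarize_range(scores, low_pct, high_pct):
    """Summarize users whose percentile rank is in [low_pct, high_pct)."""
    ranked = sorted(scores)  # lowest likelihood first
    n = len(ranked)
    subset = ranked[n * low_pct // 100 : n * high_pct // 100]
    if not subset:
        return {"total_users": 0,
                "predicted_conversion_rate": None,
                "relative_to_average": None}
    rate = mean(subset)      # assumed: mean likelihood in the range
    overall = mean(ranked)   # assumed: mean likelihood across the group
    return {
        "total_users": len(subset),
        "predicted_conversion_rate": rate,
        "relative_to_average": rate / overall if overall else None,
    }


# Made-up likelihoods for ten users.
scores = [0.05, 0.10, 0.15, 0.20, 0.25, 0.30, 0.35, 0.40, 0.45, 0.70]
summary = {
    name: summarize_range(scores, low, high)
    for name, (low, high) in PRESET_RANGES.items()
}
```

With these ten sample users, the Most Likely range holds the single highest-scoring user, the Somewhat Likely range holds the next two, and the Least Likely range holds the lowest-scoring user.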

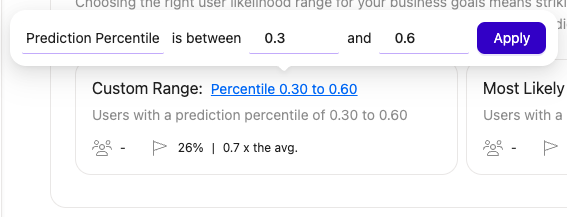

Custom Ranges

Using the Custom Range tile, you can specify a custom prediction percentile or fixed number of users. The tile will then display the total users, predicted conversion rate, and conversion likelihood relative to the average for the range specified.
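The two ways of defining a custom range can be sketched as follows. This is a hedged illustration, not the product's logic. It assumes that a custom percentile selects users at or above that percentile, that a fixed number selects the top N users by predicted likelihood, and that the displayed rate is the mean likelihood of the selected users. The function `custom_range` and its parameters are hypothetical.

```python
from statistics import mean


def custom_range(scores, min_percentile=None, top_n=None):
    """Summarize a custom range chosen by percentile or by user count."""
    if (min_percentile is None) == (top_n is None):
        raise ValueError("pass exactly one of min_percentile or top_n")
    ranked = sorted(scores, reverse=True)  # highest likelihood first
    if top_n is None:
        # Users at or above percentile p are the top (100 - p)% of the group.
        top_n = len(ranked) * (100 - min_percentile) // 100
    subset = ranked[:top_n]
    if not subset:
        return {"total_users": 0,
                "predicted_conversion_rate": None,
                "relative_to_average": None}
    rate = mean(subset)
    overall = mean(ranked)
    return {
        "total_users": len(subset),
        "predicted_conversion_rate": rate,
        "relative_to_average": rate / overall if overall else None,
    }


# Made-up likelihoods for ten users.
sample = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0]
by_percentile = custom_range(sample, min_percentile=80)  # top 20% of users
by_count = custom_range(sample, top_n=3)                 # top 3 users
```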

Troubleshoot a failed pipeline

The most common reason for a prediction to fail is that there are not enough users in the pipeline, and therefore not enough real-world training examples for the model to generate accurate predictions. This can happen for a number of reasons, including:

- The selected conversion window is too short. The conversion time frame you select is the lookback time that Cortex will use to analyze which behaviors lead to conversion. If not enough users converted within this time frame, Cortex will not be able to draw meaningful connections and create accurate predictions. Consider selecting a longer conversion time frame to increase the number of users in the pipeline.

- Exclusion criteria are too stringent. While using exclusion criteria to narrow a prediction to a subset of users can help improve its accuracy, an overly restricted prediction can result in an inadequate number of total users and a failed pipeline. If you are using exclusion criteria in your prediction, consider loosening or eliminating certain conditions.
